Table of Contents

- What counts as over-disclosure in incident footage?

- When can face anonymization be narrowed?

- Practical workflow to prepare a clip without over-disclosure

- Scope and limitations to set with stakeholders

- Release scenarios and redaction targets

- Quality assurance checklist for technical teams

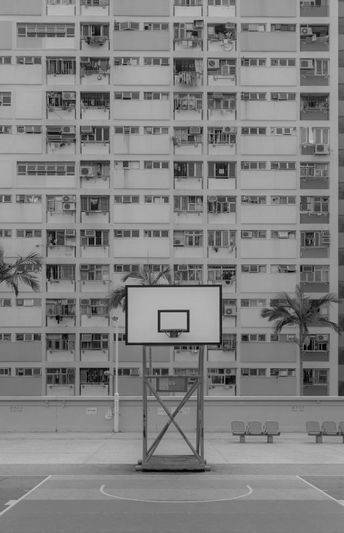

- FAQ - Public Housing Incident Footage