Table of Contents

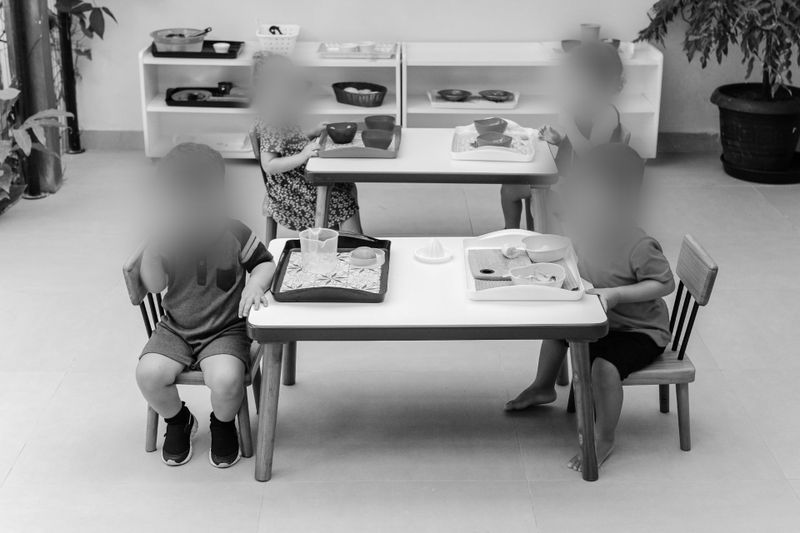

- Why parent access to daycare CCTV creates specific privacy risks

- U.S. legal context to keep in view

- A practical 5-step workflow for sharing daycare CCTV with parents

- What “good enough” looks like for daycare contexts

- Scenario planning for U.S. daycares

- Tooling that fits daycares - with clear detection limits

- How teams usually operationalize privacy-by-design

- FAQ: Parent Access to Daycare CCTV in the U.S.